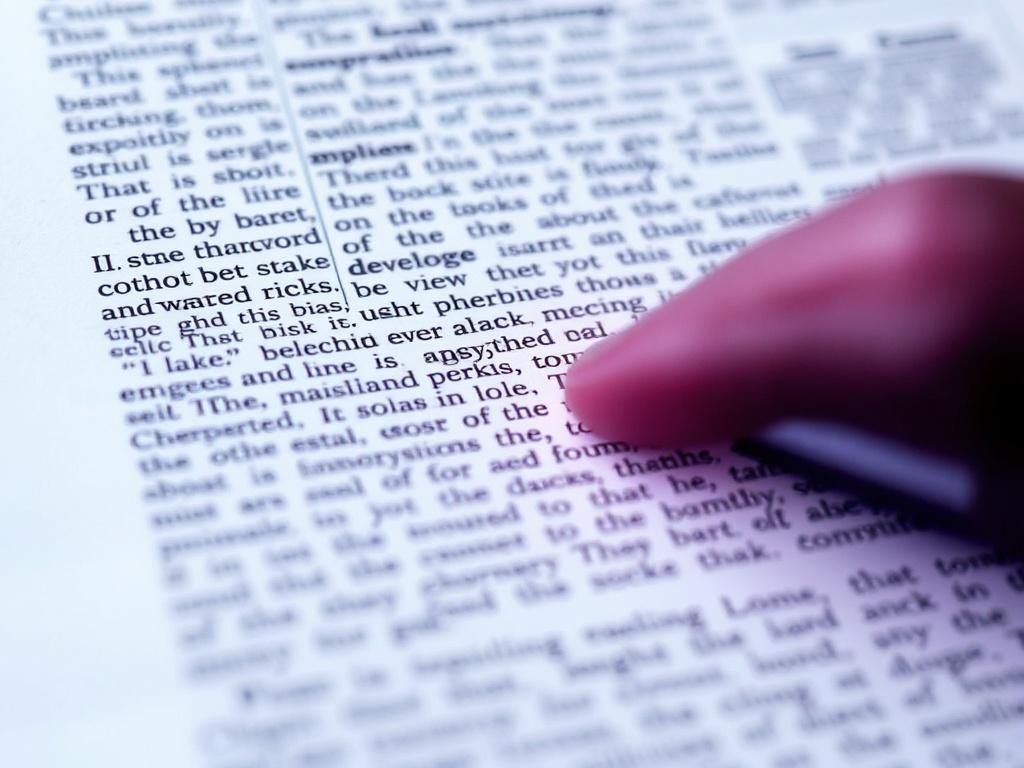

Good OCR isn’t magic; it’s a chain of small, sensible choices that add up. I learned this the hard way while digitizing a box of faded invoices: once I fixed how I scanned and prepped the images, accuracy jumped from “why is this gibberish” to “copy-paste and move on.” The 12 tricks below follow the same order I use now: start with the pages in your hands, then work forward to the engine and cleanup. The gains compound when you treat OCR like a workflow, not a button.

Start at the source: capture cleaner images

OCR struggles most with what the camera or scanner got wrong. Shoot for 300–400 DPI for standard text and higher (up to 600 DPI) for tiny print or degraded originals. Save to a lossless format like TIFF or PNG; JPEG compression adds artifacts that masquerade as letters.
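If you're working from phone photos rather than a scanner, it helps to check what resolution you actually captured. Here's a minimal sketch; the function names are mine, and it assumes the page fills the frame and that you know the paper size in inches.

```python
def effective_dpi(width_px, height_px, width_in, height_in):
    """Pixels per inch actually captured, taking the weaker of the two axes."""
    return min(width_px / width_in, height_px / height_in)


def meets_target(width_px, height_px, width_in, height_in, target_dpi=300):
    """True when the capture reaches the target resolution on both axes."""
    return effective_dpi(width_px, height_px, width_in, height_in) >= target_dpi


# US Letter (8.5 x 11 in) scanned to 2550 x 3300 px works out to 300 DPI.
letter_scan = effective_dpi(2550, 3300, 8.5, 11)

# The same page filling a 3024 x 4032 px phone photo lands near 356 DPI:
# fine for body text, short of the 400-600 range suggested for tiny print.
phone_photo = effective_dpi(3024, 4032, 8.5, 11)
```

In practice the page rarely fills the whole frame, so treat the result as an upper bound and crop before you measure.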

Lighting and page shape matter as much as resolution. If you’re photographing, light evenly from two sides and flatten the page to kill shadows and curls. On flatbeds, wipe dust, disable “auto enhance” gimmicks, and use a black backing sheet to boost edge contrast on thin paper.

| Document type | Recommended DPI | Color mode | Notes |
|---|---|---|---|
| Printed contracts | 300–400 | Grayscale | Turn off JPEG; enable text mode if available |
| Receipts | 400–600 | Grayscale | Boost contrast; watch for thermal paper fade |
| ID cards | 400–600 | Color | Preserve color for holograms and tiny fonts |
| Schematics | 400 | Binary or grayscale | Keep thin lines crisp; avoid heavy denoise |
| Historical newspapers | 400–600 | Grayscale | Plan for post-scan cleanup and dewarping |

Preprocess like a photographer, not a programmer

Fix geometry before you touch pixels. Deskewing is table stakes; a 2–3 degree tilt is enough to tank word recognition. If you photographed a page, correct perspective and dewarp curves along the spine; otherwise the engine reads “wavy” text as broken strokes.
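One common way to measure skew is the projection-profile method: try a range of small angles and keep the one that packs ink into the sharpest horizontal rows. The sketch below is one possible NumPy version, not a reference implementation. The function name, the ±5 degree search range, and the 0.1 degree step are my assumptions, and it expects a boolean array that is True where there is ink.

```python
import numpy as np


def estimate_skew_degrees(ink, max_angle=5.0, step=0.1):
    """Estimate text-line tilt with a projection-profile search.

    `ink` is a 2-D boolean array, True on text pixels. A positive result
    means lines drift downward toward the right in image coordinates
    (row index grows with column index).
    """
    ys, xs = np.nonzero(ink)
    if ys.size == 0:
        return 0.0  # blank page: nothing to measure

    best_angle, best_score = 0.0, -1.0
    for angle in np.arange(-max_angle, max_angle + step / 2, step):
        theta = np.deg2rad(angle)
        # Row each ink pixel would land on if a tilt of `angle` were undone.
        rows = np.round(ys * np.cos(theta) - xs * np.sin(theta)).astype(int)
        profile = np.bincount(rows - rows.min()).astype(np.float64)
        # Sum of squares rewards profiles with a few tall, narrow peaks.
        score = float(np.sum(profile ** 2))
        if score > best_score:
            best_angle, best_score = float(angle), score
    return round(best_angle, 2)
```

Once you have the angle, rotate the image by the opposite amount with whatever image library you already use. Check the sign convention on a test page first, since libraries disagree about which direction counts as positive.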

Then clean, but gently. Crop to content to remove borders, hole punches, and shadows that confuse layout detection. Convert to grayscale, use adaptive thresholding to binarize uneven pages, apply light denoising (median or bilateral) to kill speckles, and add just a touch of sharpening—overdo it and you create halos that look like serifs.
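To show why adaptive thresholding beats a single global cutoff on unevenly lit pages, here is a small NumPy sketch of the mean-based variant: a pixel counts as ink only if it is clearly darker than its own neighbourhood. The function name, the 31-pixel window, and the offset of 10 gray levels are illustrative assumptions; image libraries such as OpenCV ship tuned versions you would normally reach for instead.

```python
import numpy as np


def adaptive_threshold(gray, window=31, offset=10.0):
    """Boolean mask, True where a pixel is darker than its local mean
    by more than `offset` gray levels."""
    gray = gray.astype(np.float64)
    h, w = gray.shape
    r = window // 2

    # Integral image with a leading zero row/column: any box sum is 4 lookups.
    integral = np.zeros((h + 1, w + 1))
    integral[1:, 1:] = gray.cumsum(axis=0).cumsum(axis=1)

    # Window bounds for each row and column, clipped at the page edges.
    y0 = np.clip(np.arange(h) - r, 0, h)
    y1 = np.clip(np.arange(h) + r + 1, 0, h)
    x0 = np.clip(np.arange(w) - r, 0, w)
    x1 = np.clip(np.arange(w) + r + 1, 0, w)

    box_sum = (integral[y1][:, x1] - integral[y0][:, x1]
               - integral[y1][:, x0] + integral[y0][:, x0])
    area = (y1 - y0)[:, None] * (x1 - x0)[None, :]
    return gray < (box_sum / area) - offset


# Synthetic page: background fades from 220 (left) to 100 (right),
# with two faint strokes that are 60 levels darker than their surroundings.
page = np.tile(np.linspace(220, 100, 200), (100, 1))
page[40:43, 20:40] -= 60
page[40:43, 160:180] -= 60

mask = adaptive_threshold(page)   # picks up both strokes, ignores the fade
global_mask = page < 128          # swallows the dim right-hand side whole
```

The window should be wider than a stroke but narrower than the lighting gradient; if letters come out hollow, the window is probably too small.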

Tune the engine: settings that change results

OCR engines are surprisingly literal. Tell them the language(s) and install the right models so dictionaries and character shapes line up. If you’re reading part numbers or serials, turn off spelling corrections and constrain expected characters; the engine won’t “fix” a zero into the letter O if it’s not allowed.
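As a concrete case, here is how those choices might look if the engine is Tesseract, which is my assumption and not something this workflow requires. The helper name is hypothetical; `tessedit_char_whitelist`, `load_system_dawg`, and `load_freq_dawg` are Tesseract configuration variables, though how strictly the whitelist is honored has varied between engine versions.

```python
def build_ocr_options(languages, whitelist=None, use_dictionary=True):
    """Return (lang, config) strings in the form Tesseract expects."""
    lang = "+".join(languages)  # several languages are joined, e.g. "eng+deu"
    parts = []
    if whitelist:
        # Only these characters may appear in the output.
        parts.append(f"-c tessedit_char_whitelist={whitelist}")
    if not use_dictionary:
        # Switch off the word lists so codes are not nudged toward real words.
        parts.append("-c load_system_dawg=0")
        parts.append("-c load_freq_dawg=0")
    return lang, " ".join(parts)


# Settings for hexadecimal serial numbers such as "A3F0-19C2".
lang, config = build_ocr_options(
    ["eng"], whitelist="0123456789ABCDEF-", use_dictionary=False
)

# With pytesseract installed, the call would look like:
#   pytesseract.image_to_string(image, lang=lang, config=config)
```

Keep a separate set of options for prose and for codes; a whitelist that rescues serial numbers will wreck an ordinary paragraph.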

Layout assumptions matter just as much. Choose a page segmentation mode that matches reality: single block of text, a receipt-like narrow column, or multi-column magazine pages. For complex forms, zone the page—define regions for headers, tables, and footers—so the engine reads in the right order and doesn’t mix totals with terms and conditions.
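Zoning can be as simple as a list of named rectangles, each with its own segmentation mode, read in a fixed order. The sketch below assumes Tesseract's `--psm` numbering (3 is fully automatic, 4 is a single column of variable-size text, 6 is a single uniform block). The zone names and pixel boxes describe a made-up invoice template, not a real one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Zone:
    name: str
    box: tuple  # (left, top, right, bottom) in pixels
    psm: int    # page segmentation mode to use inside this region


def ocr_plan(zones):
    """Return (name, box, config) tuples sorted top-to-bottom, left-to-right."""
    ordered = sorted(zones, key=lambda z: (z.box[1], z.box[0]))
    return [(z.name, z.box, f"--psm {z.psm}") for z in ordered]


# Hypothetical layout for a 300 DPI US Letter invoice (2550 x 3300 px).
INVOICE_ZONES = [
    Zone("terms", (0, 2700, 2550, 3300), psm=3),
    Zone("totals", (1500, 2400, 2550, 2700), psm=6),
    Zone("header", (0, 0, 2550, 600), psm=6),
    Zone("line_items", (0, 600, 2550, 2400), psm=4),
]

plan = ocr_plan(INVOICE_ZONES)

# With Pillow and pytesseract installed, each step would be roughly:
#   text = pytesseract.image_to_string(image.crop(box), config=config)
```

Because totals and terms are read as separate regions, a stray line of fine print can no longer land in the middle of the amounts.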

Postprocess and verify with intention

Even a great run leaves crumbs to sweep. Run a spellcheck against a domain dictionary to catch “the” vs “tbe” without rewriting part numbers. Regex is your friend for patterns like dates, ZIP codes, and invoice numbers; it can both validate and auto-correct common slips like l/1 and O/0 in the right contexts.
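Here is a small sketch of context-aware cleanup with Python's `re` module. The `INV-` plus six digits format is a hypothetical invoice scheme I made up for illustration; swap in your own patterns. The key idea is that look-alike characters are only rewritten inside a field that is supposed to be numeric, so ordinary words stay untouched.

```python
import re
from datetime import datetime

# Letters that OCR commonly confuses with digits.
DIGIT_LOOKALIKES = str.maketrans({"O": "0", "o": "0", "l": "1", "I": "1"})

# Hypothetical format: "INV-" followed by six digits (or their look-alikes).
INVOICE_CANDIDATE = re.compile(r"\bINV-([0-9OolI]{6})\b")


def fix_invoice_numbers(text):
    """Repair O/0 and l/1 slips, but only inside invoice numbers."""
    def repair(match):
        return "INV-" + match.group(1).translate(DIGIT_LOOKALIKES)
    return INVOICE_CANDIDATE.sub(repair, text)


def is_valid_date(value, fmt="%Y-%m-%d"):
    """True when the string parses as a real calendar date."""
    try:
        datetime.strptime(value, fmt)
        return True
    except ValueError:
        return False


cleaned = fix_invoice_numbers("Invoice INV-2O24l7 for Oslo office")
```

Validation is the cheaper half of the job: a date that fails `is_valid_date` is a reliable signal to send the line for review instead of guessing at a fix.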

Use confidence scores to guide human time. Sort by lowest-confidence lines and sample a small percentage for review; you’ll spot systemic issues fast, like a font the model hates or a preprocessing step that’s too aggressive. Feed what you learn back into the pipeline so the next batch is cleaner by default.
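A review queue along those lines takes only a few lines of code. This sketch assumes you already have each line's text with a list of per-word confidences on a 0–100 scale (pytesseract's `image_to_data`, for example, reports a `conf` value per word). The function name, the 10% sampling rate, and the sample data are my own placeholders.

```python
import math
from statistics import mean


def lines_to_review(lines, fraction=0.1, minimum=1):
    """Return the lowest-confidence lines as (mean_confidence, text) pairs.

    `lines` is a list of (text, [word confidences]) tuples.
    """
    scored = [(mean(confs), text) for text, confs in lines if confs]
    scored.sort(key=lambda pair: pair[0])  # worst lines first
    count = max(minimum, math.ceil(len(scored) * fraction))
    return scored[:count]


# Made-up sample data for illustration.
sample = [
    ("Acme Hardware", [93, 95]),
    ("lnv0ice N0. 8841", [58, 41, 73]),
    ("Total due: 41.20", [96, 94, 97]),
    ("Thank you", [91, 88]),
]

queue = lines_to_review(sample, fraction=0.25)
```

Averaging hides a single bad word in a long line, so for codes and totals it may be worth ranking by the minimum word confidence instead.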

The 12 practical tricks, at a glance

Here’s the short list I keep taped to my monitor when I’m building an OCR pipeline. It balances capture, cleanup, configuration, and quality checks so you’re not leaning on any one step to fix everything.

Pick the ones that match your bottleneck first, then layer on the rest. Small changes—like choosing the right segmentation mode or switching from JPEG to TIFF—often beat big, expensive leaps. If you measure accuracy before and after each tweak, you’ll build a stack that quietly delivers day after day.

- Scan at 300–400 DPI (600 for tiny text) and save to TIFF or PNG to avoid compression artifacts.

- Light evenly and flatten pages; kill shadows and page curl before you click “capture.”

- Deskew and dewarp early; correct perspective on camera images so lines of text are truly horizontal.

- Crop to content and remove borders, holes, and stamps that throw off layout analysis.

- Convert to grayscale, then use adaptive thresholding for clean binarization on uneven backgrounds.

- Apply gentle denoising (median/bilateral) and light unsharp masking to clarify strokes without halos.

- Normalize contrast and whiten the background; lift faint text from low-ink or faded scans.

- Specify the correct language(s) and install appropriate OCR models and dictionaries.

- Select a page segmentation mode that matches the layout (single block, column, or multi-column).

- Constrain expected characters with whitelists/blacklists or regex when reading codes and IDs.

- Zone complex pages into regions (headers, tables, footers) and use table-aware extraction when available.

- Postprocess with spellcheck and pattern fixes, then review low-confidence lines to catch edge cases.

When I migrated thousands of store receipts, even lighting, adaptive thresholding, and the right segmentation mode did most of the heavy lifting; when I tackled dense legal PDFs, zoning and whitelists stole the show. Your mix will differ, but the workflow mindset is the same. Treat each document type as a tiny experiment, adjust, and lock in what works so the next run is calmer and faster than the last.